Data Future¶

Your present data for the future.

Data Future is a service that ensures that your current scientific data is preserved and remains accessible over time. It applies procedures based on advanced and standard data preservation specifications.

Main Features¶

Simple and flexible for data producers

Designed to support large amounts of scientific data

Responsible for the content of deposited data

Preservation over shorter or longer periods (from a few years to decades)

Complement to the F.A.I.R and Open Science process

Apply formal OAIS preservation standards and best practices

Enable customized publication

Note: this is a draft document; its content is a work in progress.

What it does not do¶

Data curation or application of the F.A.I.R principles to data (this is done by the data producer)

Legal deposit of digital documents or administrative records

Probative value (legal purposes of proof)

Preservation ad vitam aeternam

Who can use Data Future?¶

IN2P3 researchers, laboratories and projects

IN2P3 partners with a prior agreement

What is Data Future?¶

Data Future is the IN2P3 data preservation service. It is more than just data storage!

Data Future is a service provider that carries out complex preservation activities. It acquires, processes and stores data files from research projects. It is responsible for the content of deposited data and ensures that it is conserved for a defined period of time and remains available and accessible throughout that period. Data Future can also disseminate data (i.e., make them available) by providing the necessary technical resources.

In a nutshell, Data Future supports the sharing of research data and helps keep them aligned with the FAIR principles (Findable, Accessible, Interoperable, and Reusable) over time.

How does Data Future work?¶

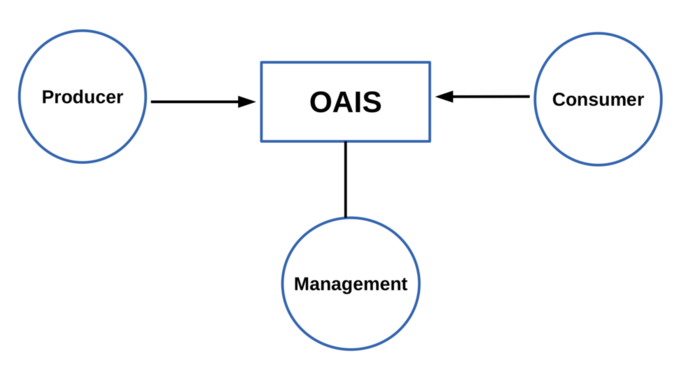

Data Future is an organization (people and systems) that adapts the OAIS Reference Model and other standard specifications to preserve and manage huge scientific datasets.

Therefore, it continually evaluates technological changes and user community requirements to ensure that data remains available and accessible over time.

What standards does Data Future use?¶

The Data Future service specifications are based on common international standards for transmitting, describing and preserving digital data. The main standard is the OAIS Reference Model, which has Information Packages as its basis. The pan-European standard for packaging data and metadata for long-term storage is the Common Specification for Information Packages. The best-known and most widely used metadata standard is Dublin Core, a basic, domain-agnostic standard that can be easily understood and implemented.

What are the differences between preservation and backup?¶

There is an important distinction between preservation and backup. Recognizing it, and understanding the key indicators of each, is critical for achieving your goals. In our context, the main differences are:

| | Preservation | Backup |
|---|---|---|
| Objective | long-term conservation | operational continuity |
| Target data | precious or finished | working or in progress |
| Retention period | long-term (years, decades) | short (days, weeks, months) |
| Scope | only selected data | potentially all data |
| Creation process | semi-automatic (curation) | automatic (humanless) |
| Multiple copies | various geographical locations | one geographical location |
| Modifiable content | rarely | frequently |
| Creation frequency | one time (one version) | many times (many versions) |
| Used technologies | open and free | proprietary |
| Software dependency | weak | strong |
| Interoperability | high | low |
| Restitution time | non-critical | critical (short) |
| Cleaning/removing | non-automatic (human) | automatic (humanless) |
| Disaster recovery support | strong | weak |
| Audience | external or dissemination | internal |

At the IN2P3 Computer Centre, preservation and backup are separate services:

Preservation: Data Future

Backup: Backup Service

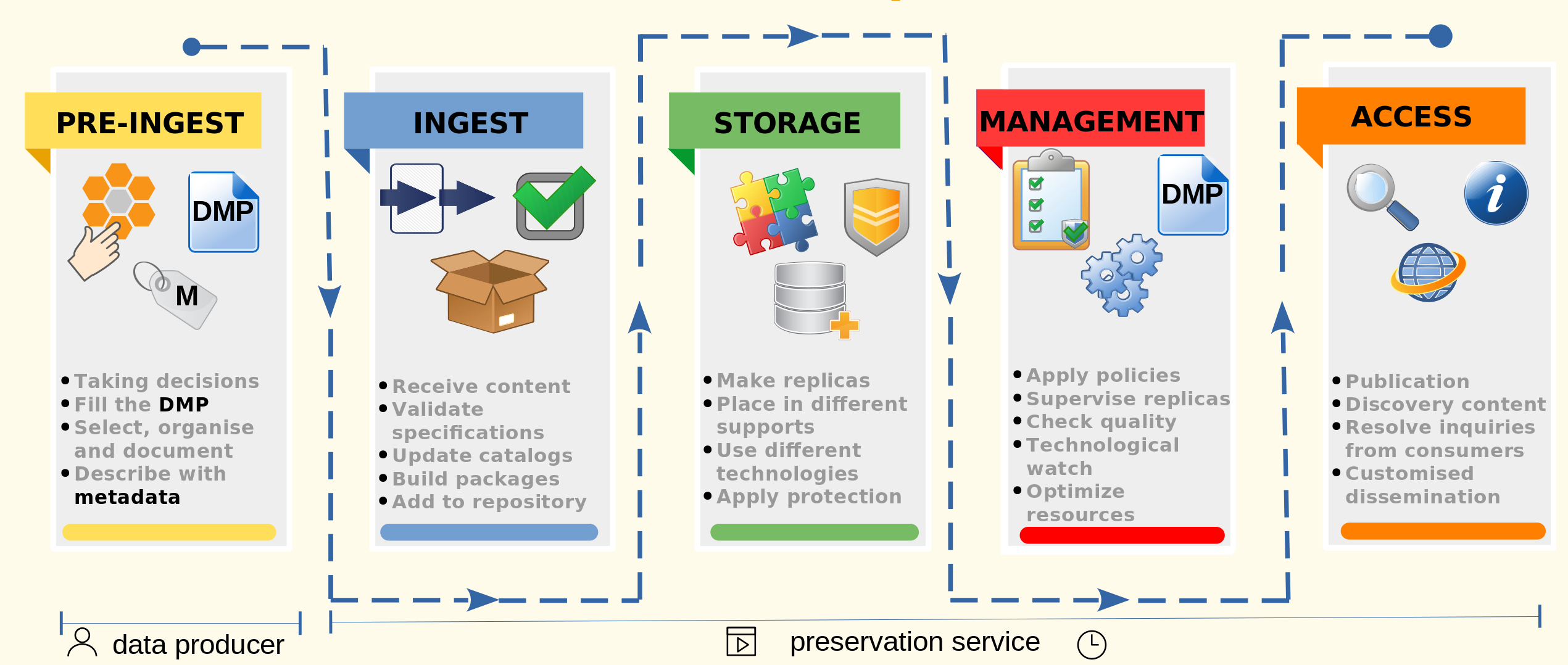

What is the Data Future preservation process?¶

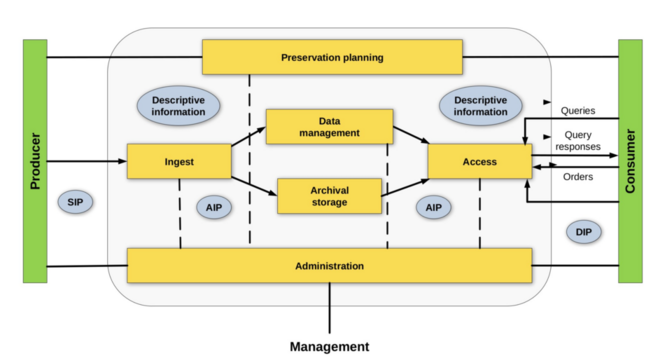

The preservation process is based on the OAIS Reference Model and consists of the following five steps:

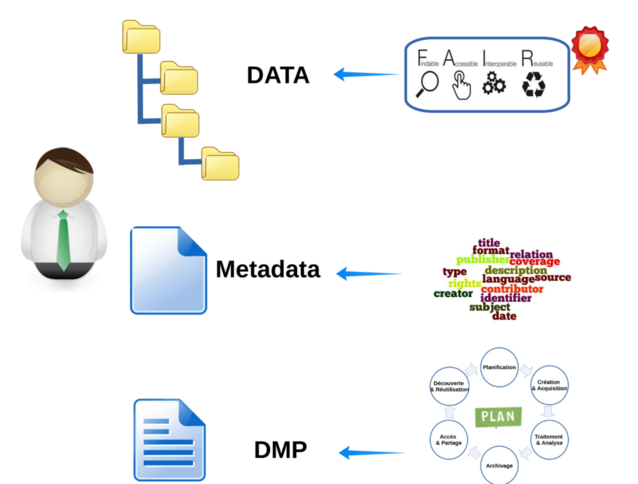

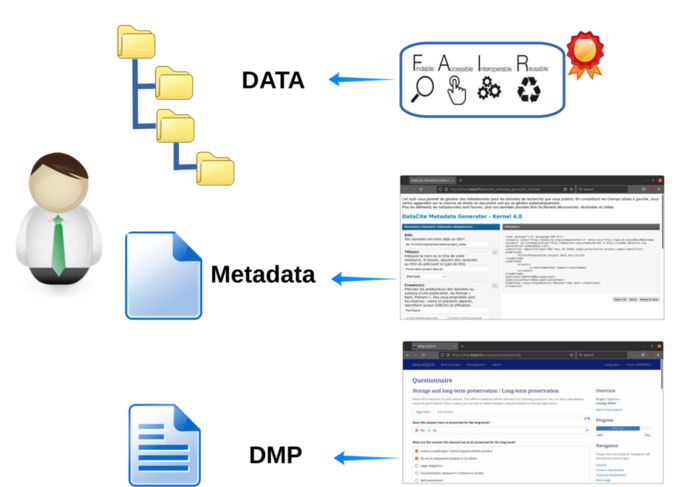

Pre-Ingest

Pre-Ingest is performed by the data producer. It covers the main decisions about the future of the data (purpose, responsible contacts, owners, licenses, publication, …); the selection, organization and documentation of the dataset to conserve; and the creation of the metadata that describe the dataset. Finally, the producer writes a Data Management Plan (DMP) to guide actions related to governance and conservation.

Ingest

The ingest process involves receiving the dataset from the data producer. An automatic validation is performed against the preservation specifications. Descriptive and administrative catalogs are updated with metadata and supervisory information. Archival Information Packages (AIP) are prepared for delivery to the storage infrastructure. Finally, the content is added to the main data repository.
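As an illustration of the automatic validation step, here is a minimal Python sketch. The required fields, the empty-file rule and every name in it (`REQUIRED_FIELDS`, `ValidationReport`, `validate_submission`) are assumptions made for this example; the actual Data Future preservation specifications are not detailed in this document.

```python
from dataclasses import dataclass, field

# Hypothetical rule set: the real preservation specifications may differ.
REQUIRED_FIELDS = ("title", "creator", "identifier", "rights")


@dataclass
class ValidationReport:
    missing_fields: list = field(default_factory=list)
    empty_files: list = field(default_factory=list)

    @property
    def ok(self):
        """True when no problem was recorded."""
        return not self.missing_fields and not self.empty_files


def validate_submission(metadata, file_sizes):
    """Check a submission's metadata and file listing against the rules above.

    metadata maps a field name to its value; file_sizes maps a file path
    to its size in bytes.
    """
    report = ValidationReport()
    for name in REQUIRED_FIELDS:
        if not str(metadata.get(name, "")).strip():
            report.missing_fields.append(name)
    for path, size in sorted(file_sizes.items()):
        if size == 0:
            report.empty_files.append(path)
    return report
```

A submission would only move on to AIP preparation when `report.ok` is true; otherwise the report tells the producer what to fix.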

Storage

Several copies (replicas) are made at different geographical locations. Different technologies are employed to support these copies. Protection policies are applied to datasets.
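The idea of spreading copies across locations and technologies can be expressed as a simple policy check. The following Python sketch is illustrative only: the `Replica` type, the site and technology labels, and the thresholds of two sites and two technologies are assumptions, not the actual Data Future policy.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Replica:
    site: str        # hypothetical site label, e.g. "site-a"
    technology: str  # hypothetical storage technology, e.g. "tape"


def meets_replication_policy(replicas, min_sites=2, min_technologies=2):
    """Return True if the copies span enough distinct sites and technologies."""
    sites = {replica.site for replica in replicas}
    technologies = {replica.technology for replica in replicas}
    return len(sites) >= min_sites and len(technologies) >= min_technologies
```

Two copies on the same site, even on different media, would fail this check because a single local incident could destroy both.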

Management

This step applies policies for managing access, and supervises and monitors replicas at the different locations. Periodic quality checks, such as data integrity checks, are performed. A continuous technology watch is kept on storage technologies and infrastructure. Content migration is planned to optimize resources.
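A periodic data integrity check is commonly implemented as a fixity check: a checksum recorded at ingest is compared with one recomputed later. The Python sketch below assumes SHA-256 and a manifest that maps relative paths to digests; both choices, and the function names, are illustrative and not taken from the Data Future implementation.

```python
import hashlib
from pathlib import Path


def sha256_of(path, chunk_size=1 << 20):
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with open(path, "rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def check_fixity(manifest, root):
    """Compare digests recorded at ingest with freshly computed ones.

    manifest maps a relative file path to its recorded digest.
    Returns a dict mapping each path to "ok", "corrupted" or "missing".
    """
    status = {}
    for relative_path, expected in manifest.items():
        target = Path(root) / relative_path
        if not target.is_file():
            status[relative_path] = "missing"
        elif sha256_of(target) == expected:
            status[relative_path] = "ok"
        else:
            status[relative_path] = "corrupted"
    return status
```

Reading in chunks keeps memory use constant, which matters for the large files typical of scientific datasets.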

Access

This step enables dataset publication. It implements methods to support advanced content discovery, receives and resolves inquiries from consumers, and supports customized dissemination of large datasets.

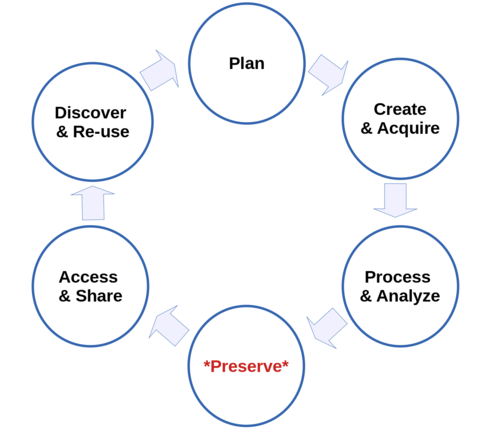

Why preservation in research data lifecycle?¶

Some research data remain valuable long after the project that produced them has ended. The research data lifecycle illustrates the stages of data management and describes how data flow through a research project from start to finish and beyond. Data management refers to the process of deciding and describing how data will be collected, organized, preserved and shared.

Some benefits of managing the research data lifecycle are:

Find and understand data when needed

Project continuity through researcher or staff changes

Organized data saves time and money

Reduces risk of lost or mis-used data

Comply with funder and community requirements

Allows for easier validation of results

Plan

At this stage, it is necessary to identify the data that will be collected or used in the research project and to plan for data management throughout the lifecycle. This is the stage at which a Data Management Plan (DMP) is created. Many public funders of research ask for a DMP to be submitted as part of a research application.

Create and Acquire

This is the stage at which experiments are carried out, observations are made, and data are created or acquired. It involves documenting the data producer's methods and instruments, along with the information necessary to interpret and use or re-use the data.

Process and Analyze

Data once acquired will need to be processed in order to be usable. This might involve cleaning data to eliminate noise, combining data from multiple sources, transforming data from one state to another, and using procedures to validate or quality-control data. Any data processing will need to be described and documented, such that the end result could be replicated from the raw data.

During data analysis, the raw materials of research are interrogated to produce the insights that constitute the research findings, which will be written up and published in research publications. Instruments and methods used for analysis should be described and documented; code written for purposes of data analysis and interpretation may need to be preserved and made available in support of research results.

Preserve

Towards the completion of the research project, the data that corroborate the research findings and have long-term value are preserved for the future. Data will need to be prepared for preservation and re-use. In many cases this will involve deposit of the digital data in a suitable data centre. Preservation activities may involve quality assurance of data, file format conversion, creation of metadata records with assignment of Digital Object Identifiers (DOIs) to datasets, licensing datasets for re-use, and putting in place any required access controls. The preservation service ensures that the data are stored and preserved properly.

Access and Share

Scientific publications based on data should include a data citation or a statement indicating where and on what terms the data can be accessed. The data preservation service will enable discovery of the data in its charge by exposing the metadata, and will provide access to the dataset when this is permitted. Data may be made publicly available, or restrictions on access may be imposed.
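A data citation can be assembled from a handful of metadata fields. The Python sketch below uses a "creators (year). title. publisher. identifier" layout, which is one common pattern and not a format prescribed by Data Future; the function name and the sample values in the usage note are hypothetical.

```python
def format_data_citation(creators, year, title, publisher, doi):
    """Build a one-line data citation ending with a resolvable DOI link."""
    authors = "; ".join(creators)
    return f"{authors} ({year}). {title}. {publisher}. https://doi.org/{doi}"
```

For example, `format_data_citation(["Doe, J."], 2024, "Example dataset", "Example Repository", "10.1234/example")` yields `Doe, J. (2024). Example dataset. Example Repository. https://doi.org/10.1234/example`.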

Discover and Re-use

Data that are available for discovery and access may be re-used by other researchers, either to corroborate the findings of the original research, or to generate new insights through further examination and analysis. At this stage the data may become raw materials acquired within a new cycle of research.

What are advantages of planning your research data management?¶

Planning your research data management practices in advance has several advantages:

Save time and money: Planning ahead for your data management needs will let you anticipate what you will need and organize your data from the start, avoiding last-minute work.

Maintain data utility: Managing and describing your data throughout the entire project will allow you and others to understand and more easily use your data in the future.

Meet grant requirements: Many funding agencies require that researchers create and follow a data management and/or data sharing plan.

When to start preserving the data for re-use in the future?¶

To increase the probability that the data will be effectively re-used in the future, curation must be planned before the data are produced. This means that reuse and preservation are sufficiently anticipated and planned. In this way the effort and cost of curating the data can be greatly reduced while the quality of the data is enhanced.

If preservation is only planned once the project has finished, there is a high probability that the preservation process and the future reuse of the data will fail.

What is data curation?¶

Data curation is the organization and integration of data collected from various sources. It involves annotation and description of the data such that the value of the data is maintained over time, and the data remains available for reuse and preservation.

Specifically, data curation is the attempt to determine what information is worth preserving and for how long.

The data producer, rather than the data repository itself, typically initiates data curation and provides metadata. Data curation activities enable data discovery and retrieval, maintain quality, add value, and provide for re-use over time.

Is there a difference between curation and F.A.I.R principles?¶

From the point of view of the preservation process, there is no difference between curation and the F.A.I.R principles applied to research data. Properly curated research data should comply with the four core F.A.I.R guiding principles. Data curation ensures that datasets are complete, well-described, and in a format and structure that best facilitates long-term access, discovery, and reuse. Thus, data producers (researchers) provide the information necessary to make data more Findable, Accessible, Interoperable and Reusable, in line with the F.A.I.R principles.

The F.A.I.R principles do not prescribe any particular technology, standard, or specification, but rather act as a guide to help data producers evaluate whether their curation work is sufficient to render their data compliant.

Please use the practical tutorials and resources proposed by your scientific community to help you curate and enrich a research dataset consistently with the F.A.I.R principles.

The curation process involves a review of a dataset and its documentation to ensure the data are as complete, understandable, and accessible as possible. The curation process is not peer review and does not judge the core scientific analysis, methodologies, or conclusions behind the data. Instead, the purpose of the review is to ensure metadata relevance, and data usability and discoverability, for a target scientific community.

What is Metadata?¶

Metadata is a shorthand representation of the data to which it refers. Metadata summarizes basic information about data, making it easier to find and work with particular instances of data. Metadata can be created manually, which tends to be more accurate, or automatically, in which case it usually contains more basic information.

Data quality is connected to the provenance of that data. Without metadata to provide provenance, a dataset has no context. A dataset without context has little value.

International standards apply to metadata. Much work is being done in the international standards communities; one example is the Dublin Core standard.

Dublin Core is a set of 15 main metadata elements for describing digital or physical resources for the purposes of discovery. Each element is optional and may be repeated. The original 15 classic metadata terms, known as the Dublin Core Metadata Element Set, are:

| # | Element | Commentary |
|---|---|---|
| 1 | Title | A name given to the resource |
| 2 | Creator | An entity primarily responsible for making the resource |
| 3 | Subject | The topic of the resource |
| 4 | Description | A presentation of content of the resource |
| 5 | Publisher | An entity responsible for making the resource available |
| 6 | Contributor | An entity responsible for making contributions to the resource |
| 7 | Date | A point or period of time associated with an event in the lifecycle of the resource |
| 8 | Type | The nature or genre of the resource |
| 9 | Format | Digital or physical representation of the resource |
| 10 | Identifier | An unambiguous reference to the resource within a given context (DOI, URI, …) |
| 11 | Source | A related resource from which the described resource is derived |
| 12 | Language | A language of the resource |
| 13 | Relation | A related resource |
| 14 | Coverage | The spatial or temporal topic of the resource, or its spatial or jurisdictional applicability |
| 15 | Rights | Information about rights held in and over the resource |
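To show how the 15 elements can be written to a file, here is a minimal Python sketch that serializes a record as simple Dublin Core XML with the standard library. The namespace URI is the one published for the Dublin Core element set; the wrapper element name `metadata`, the function name and the input layout are assumptions for this example and do not describe the Metadata Generator's actual output.

```python
import xml.etree.ElementTree as ET

DC_NS = "http://purl.org/dc/elements/1.1/"
DC_ELEMENTS = ("title", "creator", "subject", "description", "publisher",
               "contributor", "date", "type", "format", "identifier",
               "source", "language", "relation", "coverage", "rights")


def dublin_core_xml(record):
    """Serialize {element: value or list of values} as Dublin Core XML."""
    unknown = set(record) - set(DC_ELEMENTS)
    if unknown:
        raise ValueError(f"not Dublin Core elements: {sorted(unknown)}")
    ET.register_namespace("dc", DC_NS)
    root = ET.Element("metadata")  # hypothetical wrapper element
    for name in DC_ELEMENTS:       # emit elements in the order listed above
        values = record.get(name, [])
        if isinstance(values, str):
            values = [values]
        for value in values:       # every element is optional and repeatable
            ET.SubElement(root, f"{{{DC_NS}}}{name}").text = value
    return ET.tostring(root, encoding="unicode")
```

Passing a list for an element, for instance two creators, produces one XML element per value, which reflects the rule that elements may be repeated.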

What is an Information Package?¶

The core of the data preservation service is structured by the Information Package specifications, which describe a common structure for storing data and metadata in a platform-independent, authentic and long-term understandable way. The specifications are ideal for migrating long-term valuable data between generations of information systems, transferring data to dedicated long-term repositories (i.e. digital archives), or preserving and reusing data over extended (and shorter) periods of time and generations of software systems.

An information package, according to the OAIS Reference Model, contains the content to preserve along with descriptive and technical metadata.

Please see Appendix C, Information Packages structure.

Which are the Information Packages?¶

There are three categories of Information Packages:

Submission Information Package (SIP): sent to the preservation service by a producer.

Dissemination Information Package (DIP): the output of the service.

Archival Information Package (AIP): the internal format managed by the service during long-term preservation.

Please see Appendix B, OAIS functional model diagram.
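The relationship between the three package types can be sketched as a small data model in Python. This is a conceptual illustration of the OAIS roles described above, not the structure defined by the Common Specification for Information Packages; the class, field and function names are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class PackageType(Enum):
    SIP = "Submission Information Package"
    AIP = "Archival Information Package"
    DIP = "Dissemination Information Package"


@dataclass
class InformationPackage:
    kind: PackageType
    content_files: list
    descriptive_metadata: dict
    technical_metadata: dict = field(default_factory=dict)


def to_aip(sip, checksums):
    """Ingest: derive an AIP from a SIP, adding checksums as technical metadata."""
    if sip.kind is not PackageType.SIP:
        raise ValueError("only a SIP can be ingested")
    technical = dict(sip.technical_metadata, checksums=dict(checksums))
    return InformationPackage(PackageType.AIP, list(sip.content_files),
                              dict(sip.descriptive_metadata), technical)


def to_dip(aip, selected_files):
    """Access: derive a DIP holding only the files requested by a consumer."""
    if aip.kind is not PackageType.AIP:
        raise ValueError("a DIP is built from an AIP")
    missing = [name for name in selected_files if name not in aip.content_files]
    if missing:
        raise ValueError(f"files not in the package: {missing}")
    return InformationPackage(PackageType.DIP, list(selected_files),
                              dict(aip.descriptive_metadata))
```

Each function returns a new package and leaves its input untouched, mirroring the fact that the archived AIP stays unchanged when a DIP is produced for a consumer.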

Do I need to create a Submission Information Package?¶

If the dataset is already present at CC-IN2P3, building the SIP can be skipped. Instead, a similar process is carried out to create the AIP directly.

Do I need an agreement to use Data Future?¶

Yes, a prior agreement between the parties (the data producer and the Data Future service) is mandatory.

Contact

The first step is a meeting between the data producer and Data Future to discuss the requirements of the project.

The objective is to match the needs of the project with what the Data Future service offers.

Evaluation

For this meeting, the data producer is asked to provide a first version of the Data Management Plan (DMP) that describes the different aspects relevant to managing the dataset to preserve over time.

The data producer and Data Future evaluate and validate the requirements together. Details or adjustments to the DMP may be necessary.

During this meeting, a draft agreement can be established.

Testing and adjustment

Additional meetings may be necessary to test the Pre-Ingest and Ingest steps of the preservation process. The tests could include login, ingest, validity check and workflow control.

Environment and legal aspects¶

Under the law of the French Republic, the scientific data produced by public establishments (CNRS, IN2P3, etc.) are public archives, so their preservation is mandatory.

“Archives are all documents, including data, regardless of their date, place of storage, form and medium, produced or received by any natural or legal person and by any public or private service or body in the exercise of their activity.”

Article L211-1 Code du patrimoine

“Public archives are: a) the documents which result from the activity, in the context of their public service mission, of the State, regional and local authorities, public establishments and other legal persons governed by public law, or persons governed by private law entrusted with such missions […]”

Article L211-4 Code du patrimoine

The preservation (archiving) and valorization of scientific data is part of IN2P3 missions.

“For the realization of these missions, the National Institute of Nuclear Physics and Particle Physics: […] - coordinates the implementation of information systems allowing the storage, the provision to the scientific community, the processing and the valorization of all the scientific data concerned, as well as their archiving.”

Arrêté of 29th April 2016 relating to IN2P3 of CNRS.

Data producer requirements¶

Data producer, DMP and metadata¶

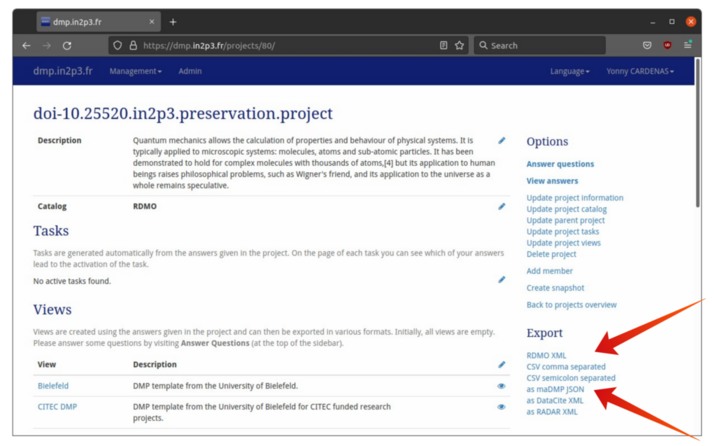

Use the IN2P3 Data Management Plan service to create and export your Data Management Plan.

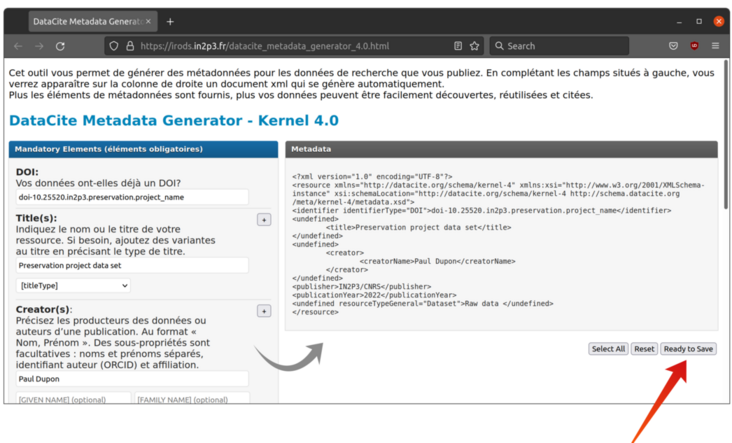

The Metadata Generator form is available to create and save your Dublin Core metadata.

Appendices¶

Appendix A OAIS environment diagram¶

Appendix B OAIS functional model diagram¶

Appendix C Information Packages structure¶

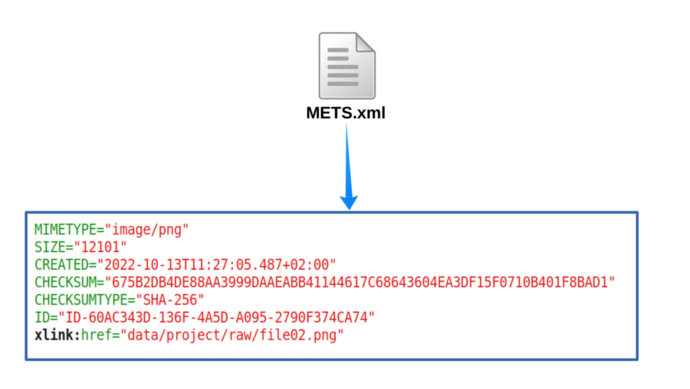

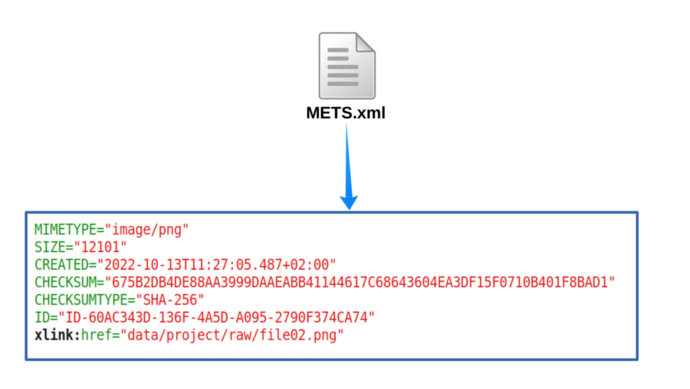

Appendix D METS section example¶

Appendix E exporting DMP to several formats¶

Appendix F saving Dublin Core to file¶

Contact¶

Please contact us via ticket at: https://support.cc.in2p3.fr

References¶

Common Specification for Information Packages

Metadata Encoding and Transmission Standard

commons-ip: E-ARK IP validation and manipulation tool and library